Young people and their parents will be asked to get over their embarrassment about discussing the sharing of nudes, amid warnings that sharing and collecting nudes has become commonplace in UK schools.

A new nationwide campaign launching today (June 17) aims to help open a dialogue between parents and teens amid warnings the sharing and soliciting of nudes is becoming “normalised” among young people.

The campaign, called ‘Think before you share’, also warns young people about the pitfalls of sharing their own and others’ explicit images.

These images and videos can quickly get out of hand and can even end up being shared by strangers on dedicated child sexual abuse websites.

It comes amid warnings that sending and soliciting nudes is becoming “normalised” in UK schools.

The campaign, which has been launched by the Internet Watch Foundation (IWF) and supported by new research from the International Policing and Public Protection Research Institute (IPPPRI, formerly PIER, the Policing Institute for the Eastern Region), part of Anglia Ruskin University, will help young people, parents and teachers to “talk about it”.

The research warns that the taking and sharing of nudes has become normalised among young people in schools, and that in some cases groups of pupils, mainly boys, are engaging in a “football card collection culture” of nudes of their female peers.

IPPPRI also warns that pupils are being exposed to unsolicited “dick pics” online on a “very regular basis”.

The IWF’s campaign will help young people understand the issues and warn of the harm that can come from this imagery being shared.

It will also offer help for parents in broaching this difficult topic, and assistance for anyone having imagery used against them in a range of situations, including sexually coerced extortion, or sextortion, attacks.

The IWF, which is the UK’s front line against online child sexual abuse imagery, is launching the campaign in response to the increasing proliferation of imagery which has been captured on a device by the child or young person themselves.

Most of this so-called self-generated child sexual abuse imagery discovered by the IWF features girls aged 11 to 13.

The campaign’s messaging was developed alongside staff, pupils, and parents.

An Assistant Principal at a UK school*, which helped the IWF to develop the campaign, warned they have received reports of pupils collecting and soliciting nude imagery of their peers.

He said: “Young people are acutely aware of the risks of digital devices and social media use, which has become normalised to an extent.

“Shame is not a starting point to begin these discussions with young people. We need to understand what the barriers are to them disclosing incidents.

“Schools have a lot to learn from young people. We know young people make mistakes, but it is not for us to demonise that. The school’s role is to create a positive culture.”

He said the Covid-19 pandemic may have accelerated young people’s use of the internet, and warned that the harms from the abuse of imagery online are likely to get worse unless more is done to help.

He said: “I think more young people will end up hurting themselves if we don’t do more to help them. There is a tragic inevitability it will get worse.”

Research from IPPPRI has found the sending and receiving of nudes has become increasingly normalised among school pupils.

One secondary school teacher told researchers: “Most girls had received unsolicited naked pictures, dick pics from boys”.

IWF analysts see how this imagery can quickly get out of control and end up being harvested by sexual predators and shared on dedicated child sexual abuse websites in the darkest corners of the internet.

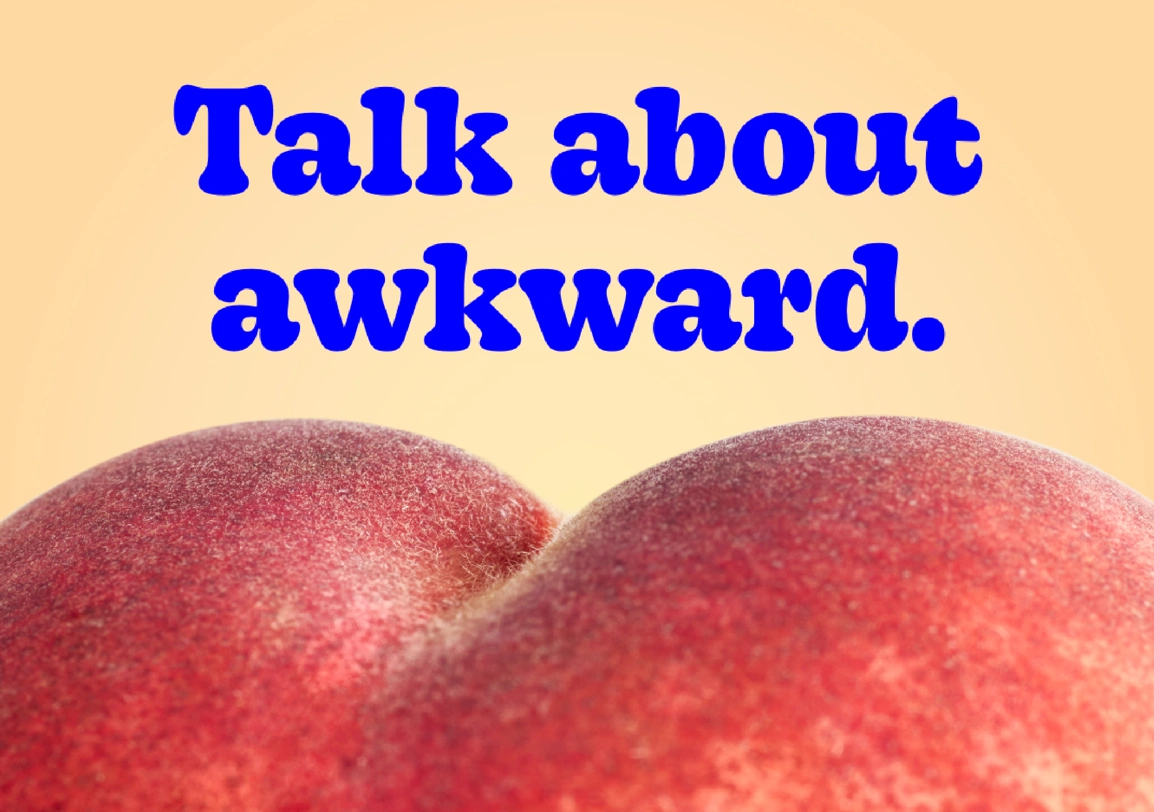

A social media campaign, including videos on TikTok, YouTube, and Snapchat, will deploy “cheeky” imagery, including suggestive images of fruits and vegetables, to help deliver the message to young people.

Parents and carers will also be targeted on social media, as well as via radio and podcast ads from comedian Diane Morgan, star of Cunk on Earth, Motherland, and Mandy.

Susie Hargreaves OBE, Chief Executive of the IWF, said: “The amount of child sexual abuse imagery on the internet is ballooning every year, and we are finding criminals running dedicated commercial child abuse sites are harvesting imagery from wherever they can to cater for this horrifying demand.

“These images can and do get out of control once they are on the internet. They can be used to shame, embarrass, bully and also so easily fall into the hands of criminals and abusers.

“Children and young people need to know these people are out there. They need to know what could happen if their images get shared out of the original circle of trust.

“They also need to know they are not alone, and that we, and others, are on hand and ready to help them if they find their imagery is being spread online, or if they fall victim to sextortion scams, threats, or intimidation.”

Professor Samantha Lundrigan, Director of IPPPRI, said: “We’re pleased to support our partners at the IWF with our research once again. This campaign, informed by our findings, will help young people, parents and teachers deal with what is a very real and critical issue affecting every young person with access to the internet, in every country worldwide.

“We spoke with 307 children who shared their experiences of growing up in a digital world. Many described receiving unwanted sexual images and some commented that it has become normalised and part of their lives. Children told us that they had been targeted for sexual images via the social media and gaming sites that they use on a regular basis. Analysis of dark web chat forums also illustrated that offenders find it easy to access children via these sites.

“We’ve now published recommendations based on our research. Above all else, we found that talking about this issue with young people is key and it needs to happen quickly. We know that the use of smartphones and the internet is here to stay, so it’s about how we make this part of education, conversation and day-to-day awareness.

“We also spoke to parents and teachers who must also make themselves aware of the tools and guidance out there to help them, how to report nude images online, and how offenders are reaching young people in the first place – often via the platforms they use as part of everyday life. If we give young people the tools they need to keep themselves safe, we’re doing everything to help them understand the risks involved in sending and receiving nude images.”

Children who are facing threats online from criminals trying to extort money or imagery from them using the threat of publishing or sharing nudes can take steps to have the imagery removed from the internet, or pre-emptively blocked before it can be uploaded.

Report Remove is a service run by the IWF and Childline which empowers young people to stop their imagery being shared on the internet and take action against threats of sexual extortion.

Find out more about the campaign at thinkbeforeyoushare.org

The public is given this advice when making a report to iwf.org.uk/report:

- Do report images and videos of child sexual abuse to the IWF to be removed. Reports to the IWF are anonymous.

- Do provide the exact URL where child sexual abuse images are located.

- Don’t report other harmful content – you can find details of other agencies to report to on the IWF’s website.

- Do report to the police if you are concerned a child may be in immediate danger.

- Do report only once for each web address – or URL. Repeat reporting of the same URL isn’t needed and wastes analysts’ time.

Find out more about Report Remove here.

*The Assistant Principal asked to remain anonymous to protect the identity of the school.